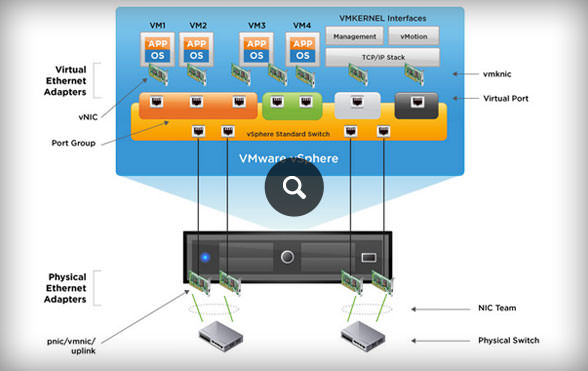

The configuration screen in the vSphere Web Client is as follows. Remember: no LACP.

Any solution? With ESXi 4.x, you need a third-party solution such as the Cisco 1000v or IBM 5000v to use LACP. Multipathing configuration can be done through the command line or through the vSphere Web Client.

I am trying to get NIC teaming to work for some of my virtual servers for increased bandwidth, especially during backups involving multiple computers on the network.

What if traffic exceeds the maximum bandwidth of a link? The result will be packet loss, no matter how many NICs are teamed together. So, if you have a single VM communicating with only a single destination, all of its traffic stays on one NIC all the time.

The ability to clear the ARP cache/table for an ESXi host is a feature that customers have requested from time to time, usually for diagnosing network-related issues. In previous releases, this was not something you could easily do and it was nice to see this feature get implemented in a new ESXCLI command that will be available as. Add a search domain to the list of domains to be searched when trying to resolve a host name on the ESXi host. The string name of a domain to add to the list of search domains. Connection type : ip, tcp, udp, all -help.

– First of all, load balancing means spreading traffic across the given or available NICs (Network Interface Cards); it does not mean that an equal amount of traffic is distributed to all participating NICs.
– With "Route based on IP hash", ESXi chooses a NIC among the team members by applying an XOR algorithm to the source IP and destination IP:

A single source IP to a single destination IP = a single NIC is used all the time
A single source IP to many destination IPs = most likely a single NIC is used all the time
Many source IPs to many destination IPs = all NICs are utilized

ESXi 6.7 U3 random VMs are losing network. Hello, In our cluster we have 8x Lenovo SR650 with Intel x722 10 Gbps network cards connected to Cisco nexus 90 and VMware ESXi 6.7 U3 with the latest build; the cluster has more than 300 VMs and also Cisco ISE machines. For the past 2 weeks we have had an issue when we start vMotion between two.

and 32GB of memory, the cluster has an aggregate 256GHz of computing power and 256GB of memory available for running virtual machines.

– LACP only works with IP Hash load balancing and Link Status network failover detection
– LACP between two nested ESXi hosts is impossible
– LACP settings do not exist in host profiles
– vSphere supports only one LACP group per distributed switch and only one LACP group per host
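As a rough illustration of the "Route based on IP hash" behaviour described above, the sketch below XORs the source and destination IPv4 addresses and takes the result modulo the number of teamed NICs. This is a simplified model, not ESXi's actual code: the function names `pick_uplink` and `uplinks_used`, the use of the full 32-bit address, and the sample addresses are all assumptions made for illustration.

```python
import ipaddress


def pick_uplink(src_ip: str, dst_ip: str, uplink_count: int) -> int:
    """Return the index of the teamed NIC chosen for one src/dst pair.

    Hypothetical model: XOR the two IPv4 addresses as integers, then
    take the result modulo the number of NICs in the team.
    """
    if uplink_count < 1:
        raise ValueError("uplink_count must be at least 1")
    src = int(ipaddress.IPv4Address(src_ip))
    dst = int(ipaddress.IPv4Address(dst_ip))
    return (src ^ dst) % uplink_count


def uplinks_used(flows, uplink_count: int) -> set:
    """Return the set of NIC indexes touched by a list of (src, dst) flows."""
    return {pick_uplink(src, dst, uplink_count) for src, dst in flows}


if __name__ == "__main__":
    # One source talking to one destination always hashes to the same NIC.
    single = [("192.168.1.10", "192.168.1.20")] * 5
    print("single flow uses NICs:", uplinks_used(single, 2))

    # Many sources talking to many destinations spread over the team.
    sources = ["10.0.0.%d" % i for i in range(1, 5)]
    targets = ["10.0.1.%d" % i for i in range(1, 5)]
    many = [(s, d) for s in sources for d in targets]
    print("many-to-many uses NICs:", uplinks_used(many, 2))
```

Because the hash depends only on the address pair, adding NICs to the team does not raise the bandwidth available to any single source/destination conversation in this model; only a mix of different address pairs spreads over the team.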

LACP is supported as of vSphere ESXi 5.1 (through the distributed virtual switch, DVS), with the restrictions listed in this section.

vSphere ESXi 4.x does not support LACP, so use the static bond method on the switch side.
Ex) In the Cisco case: "channel-group 10 mode on" under interface configuration mode.

3. The device linked to the ESXi host should be a switch, not a hub.

I have been researching and googling to clarify how VMware NIC teaming load-balances traffic between an ESXi host and a switch.

1.